This was the bit of Comp. Sci. I always thought looked uninteresting, but in fact when you delve into it it’s really fascinating and dynamic. What we’re actually talking about here is implicit, strong type checking. *Implicit* means there is not (usually) any need to declare the type of a variable or function, and *strong* means that there is no possibility of a run-time type error (so there is no need for run-time type checking).

Take a look at the following code snippet:

    fn double(x) {
        x + x
    }
    def y = 10;
    def z = double(y);

You and I can both infer a lot of information about double, x, y and z from that piece of code, if we just assume the normal meaning for +. If we assume that + is an operator on two integers, then we can infer that x is an integer, and therefore the argument to double must be an integer, and therefore y must be an integer. Likewise, since + returns an integer, double must return an integer, and therefore z must be an integer (in most languages + etc. are overloaded to operate on either ints or floats, but we’ll ignore that for now).

Before diving into how we might implement our intuition, we need a formal way of describing types. For simple types like integers we can just say int, but functions and operators are just a bit more tricky. All we’re interested in are the argument types and the return type, so we can describe the type of + as:

    (int, int) → int

I’m flying in the face of convention here, as most textbooks would write that as (int * int) → int. No, that * isn’t a typo, it is meant to be the Cartesian product operator for tuples of types, but I think it’s just confusing so I’ll stick with commas.

To pursue a more complex example, let’s take that adder function from a previous post:

    fn adder(x) {
        fn(y) { x + y }
    }

So adder is a function that takes an integer x and returns another function that takes an integer y and adds x to it, returning the result. We can infer that x and y are integers because of +, just like above. We’re only interested for the moment in the formal type of adder, which we can write as:

    int → int → int

We’ll adopt the convention that → is right associative, so we don’t need parentheses.
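To make the notation a little more concrete, here is a minimal sketch of how these type descriptions could be represented as data. It is written in Python rather than F♮, and the names `Con`, `Fun` and `show` are made up for illustration; they are not part of any F♮ implementation.

```python
from dataclasses import dataclass
from typing import Tuple, Union


@dataclass(frozen=True)
class Con:
    """A simple named type such as int."""
    name: str


@dataclass(frozen=True)
class Fun:
    """A function type: a tuple of argument types and a result type."""
    args: Tuple["Type", ...]
    result: "Type"


Type = Union[Con, Fun]

INT = Con("int")


def show(t: Type) -> str:
    """Render a type, treating → as right associative."""
    if isinstance(t, Con):
        return t.name
    shown = [show(arg) for arg in t.args]
    # A single non-function argument needs no parentheses; anything else does.
    if len(t.args) == 1 and not isinstance(t.args[0], Fun):
        left = shown[0]
    else:
        left = "(" + ", ".join(shown) + ")"
    return left + " → " + show(t.result)


plus_type = Fun((INT, INT), INT)             # the type of +
adder_type = Fun((INT,), Fun((INT,), INT))   # the type of adder

print(show(plus_type))    # (int, int) → int
print(show(adder_type))   # int → int → int
```

Because → is right associative, only a function appearing on the left of an arrow needs parentheses, which is the one case `show` checks for.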

Now for something much more tricky, the famous map function. Here it is again in F♮:

    fn map {
        (f, []) { [] }
        (f, h @ t) { f(h) @ map(f, t) }
    }

map takes a function and a list, applies the function to each element of the list, and returns a list of the results. Let’s assume, as an example, that the function being passed to map is some sort of strlen. strlen’s type is obviously:

    string → int

so we can infer that in this case the argument list given to map must be a list of string, and that map must return a list of int:

    (string → int, [string]) → [int]

(using [x] as shorthand for list of x). But what about mapping square over a list of int? In that case map would seem to have a different type signature:

    (int → int, [int]) → [int]

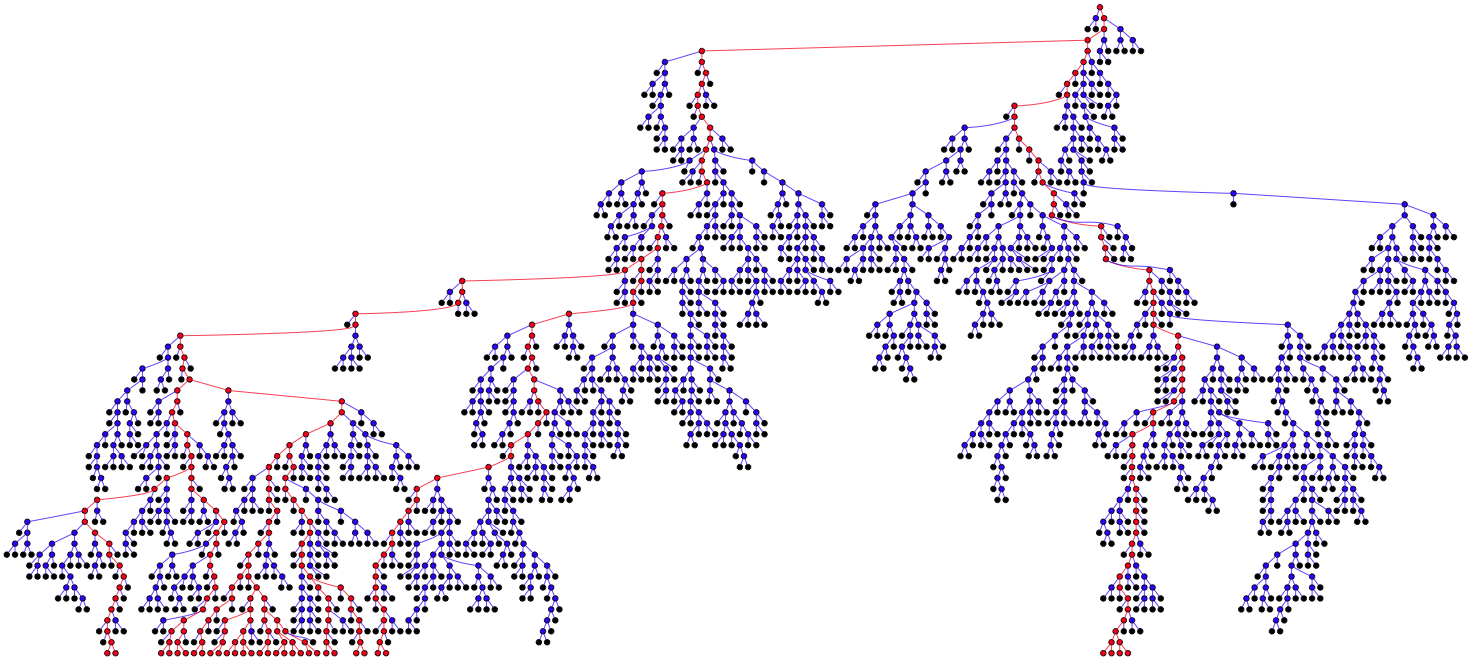

In fact, map doesn’t care that much about the types of its argument function and list, or the type of its return list, as long as the function and the lists themselves agree. map is said to be polymorphic. To properly describe the type of map we need to introduce type variables which can stand for some unspecified type. Then we can describe map verbally as taking as arguments a function that takes some type a and produces some type b, and a list of a, producing a list of b.

Formally this can be written:

    (<a> → <b>, [<a>]) → [<b>]

where <a> and <b> are type variables.
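One way to convince yourself that this single polymorphic type covers both earlier cases is to substitute concrete types for the type variables. The following is only a sketch in Python, with hypothetical class names (`Con`, `Var`, `ListOf`, `Fun`) and a hypothetical helper `instantiate`; it is not how F♮ itself stores types.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Con:
    name: str          # a concrete type such as int or string


@dataclass(frozen=True)
class Var:
    name: str          # a type variable such as <a>


@dataclass(frozen=True)
class ListOf:
    elem: object       # [x], a list of x


@dataclass(frozen=True)
class Fun:
    args: tuple        # argument types
    result: object     # result type


def instantiate(t, bindings):
    """Replace each type variable in t with the type bound to its name, if any."""
    if isinstance(t, Var):
        return bindings.get(t.name, t)
    if isinstance(t, ListOf):
        return ListOf(instantiate(t.elem, bindings))
    if isinstance(t, Fun):
        return Fun(tuple(instantiate(arg, bindings) for arg in t.args),
                   instantiate(t.result, bindings))
    return t


INT, STRING = Con("int"), Con("string")
a, b = Var("a"), Var("b")

# (<a> → <b>, [<a>]) → [<b>]
map_type = Fun((Fun((a,), b), ListOf(a)), ListOf(b))

# Mapping strlen: <a> is string and <b> is int.
strlen_case = instantiate(map_type, {"a": STRING, "b": INT})
# Mapping square: both <a> and <b> are int.
square_case = instantiate(map_type, {"a": INT, "b": INT})
```

`strlen_case` comes out as (string → int, [string]) → [int] and `square_case` as (int → int, [int]) → [int], the two signatures we wrote down by hand.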

So, armed with our formalisms, how do we go about type checking the original example:

    fn double(x) {
        x + x
    }
    def y = 10;
    def z = double(y);

Part of the trick is to emulate evaluation of the code, but only a static evaluation (we don’t follow function calls). Assume that all we know initially is the type of +. We set up a global type environment, completely analogous to a normal interpreter environment, but mapping symbols to their types rather than to their values. So our type environment would look like:

    {
        '+' => (int, int) → int
    }

On seeing the function declaration, before we even begin to inspect the function body, we can add another entry to our global environment, analogous to the def of double (we do this first in case the function is recursive):

    {
        '+' => (int, int) → int
        'double' => <a> → <b>
    }

Note that we are using type variables already, to stand for types we don’t know yet. Now the second part of the trick is that these type variables are actually logic variables that can unify with other data.

As we descend into the body of the function, we do something else analogous to evaluation: we extend the environment with a binding for x. But what do we bind x to? Well, we don’t know the value of x, but we do have a placeholder for its type, namely the type variable <a>. We have a tiny choice to make here. Either we bind x to a new type variable and then unify that type variable with <a>, or we bind x directly to <a>. Since unifying two unassigned logic variables makes them the same logic variable, the outcome is the same:

    { 'x' => <a> }
    {
        '+' => (int, int) → int
        'double' => <a> → <b>
    }
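The “tiny choice” above can be tried out directly. This is a rough Python sketch, not F♮’s actual implementation: `TypeVar`, `resolve` and `unify_unbound` are names I have invented here, the type of + is left as a plain string placeholder, and the nested environment is modelled with the standard library’s `collections.ChainMap`.

```python
from collections import ChainMap


class TypeVar:
    """A logic variable: a placeholder for a type we do not know yet."""

    def __init__(self, name):
        self.name = name
        self.ref = None  # set once the variable is unified with something


def resolve(t):
    """Follow bindings until we reach an unbound variable or a concrete type."""
    while isinstance(t, TypeVar) and t.ref is not None:
        t = t.ref
    return t


def unify_unbound(x, y):
    """Unify two unbound variables by making one an alias of the other."""
    x, y = resolve(x), resolve(y)
    if x is not y:
        x.ref = y


a, b = TypeVar("a"), TypeVar("b")
# Placeholder entries: a string for +, and an (argument, result) pair for double.
global_env = ChainMap({"+": "(int, int) → int", "double": (a, b)})

# Choice 1: bind x to a new variable, then unify that variable with <a>.
x_var = TypeVar("x")
unify_unbound(x_var, a)
env1 = global_env.new_child({"x": x_var})

# Choice 2: bind x directly to <a>.
env2 = global_env.new_child({"x": a})

same_variable = resolve(env1["x"]) is resolve(env2["x"])
print(same_variable)       # True: both routes end at the same logic variable
print("double" in env1)    # True: the extended frame still sees global names
print("x" in global_env)   # False: the global environment itself is untouched
```

Since the extension lives in its own frame, “discarding the extended environment” later on just means going back to using `global_env`.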

With this extended type environment we descend into the body and come across the application of + to x and x.

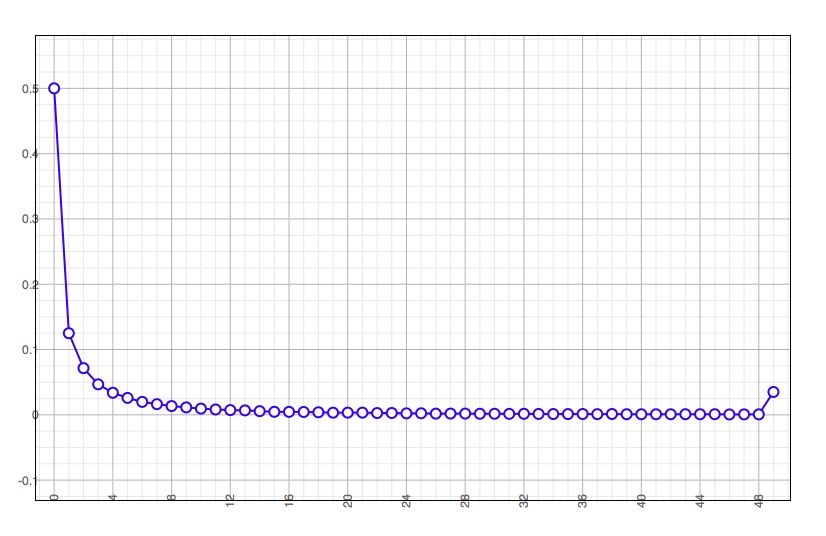

Pursuing the analogy with evaluation further, we evaluate the symbol x to get <a>. We know also that all operations return a value, so we can create another type variable <c> and build the structure (<a>, <a>) → <c>. We can now look up the type of + and unify the two types:

    (<a>, <a>) → <c>
    (int, int) → int

In case you’re not familiar with unification, we’ll step through it. Firstly <a> gets the value int:

    (int, int) → <c>
     ^
    (int, int) → int

Next, because <a> is int, the second comparison succeeds:

    (int, int) → <c>
          v
    (int, int) → int

Finally, <c> is also unified with int:

    (int, int) → int
                 ^
    (int, int) → int
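The three steps above can be reproduced with a very small unifier. This is a sketch under simplifying assumptions: simple types are plain strings, `TypeVar`, `Fun`, `resolve`, `unify` and `UnificationError` are hypothetical names, and there is no occurs check (which a real implementation would want, to reject a variable being unified with a type that contains itself).

```python
class UnificationError(Exception):
    """Raised when two types cannot be made equal."""


class TypeVar:
    def __init__(self, name):
        self.name = name
        self.ref = None          # what this variable has been unified with


class Fun:
    def __init__(self, args, result):
        self.args = list(args)   # argument types
        self.result = result     # result type


def resolve(t):
    """Follow variable bindings to the current value of t."""
    while isinstance(t, TypeVar) and t.ref is not None:
        t = t.ref
    return t


def unify(x, y):
    """Make x and y equal by binding variables, or raise UnificationError."""
    x, y = resolve(x), resolve(y)
    if x is y:
        return
    if isinstance(x, TypeVar):
        x.ref = y
    elif isinstance(y, TypeVar):
        y.ref = x
    elif isinstance(x, Fun) and isinstance(y, Fun):
        if len(x.args) != len(y.args):
            raise UnificationError("argument count mismatch")
        for x_arg, y_arg in zip(x.args, y.args):
            unify(x_arg, y_arg)          # steps one and two
        unify(x.result, y.result)        # step three
    elif x != y:
        raise UnificationError(f"cannot unify {x} with {y}")


a, c = TypeVar("a"), TypeVar("c")
application = Fun([a, a], c)             # (<a>, <a>) → <c>
plus_type = Fun(["int", "int"], "int")   # (int, int) → int

unify(application, plus_type)
print(resolve(a), resolve(c))            # int int
```

The second argument comparison does no new work: by then `resolve(a)` already yields int, so it is just int against int.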

So <a> has taken on the value (and is now indistinguishable from) int. This means that our environment has changed:

    { 'x' => int }
    {
        '+' => (int, int) → int
        'double' => int → <b>
    }

Now we know <c> (now int) is the result type of double, so on leaving double we unify that with <b>, and discard the extended environment. Our global environment now contains:

    {
        '+' => (int, int) → int
        'double' => int → int
    }

We have inferred the type of double!
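Putting the pieces together, the whole walk through double fits in a short Python sketch. Everything here is an assumption made for illustration: the tuple-based expression format, the helper names (`fresh`, `infer`, `infer_fn`, `show`) and the use of plain strings for simple types are mine, not F♮’s, and the unifier again omits an occurs check.

```python
import itertools


class UnificationError(Exception):
    pass


class TypeVar:
    def __init__(self, name):
        self.name = name
        self.ref = None


class Fun:
    def __init__(self, args, result):
        self.args = list(args)
        self.result = result


def resolve(t):
    while isinstance(t, TypeVar) and t.ref is not None:
        t = t.ref
    return t


def unify(x, y):
    x, y = resolve(x), resolve(y)
    if x is y:
        return
    if isinstance(x, TypeVar):
        x.ref = y
    elif isinstance(y, TypeVar):
        y.ref = x
    elif isinstance(x, Fun) and isinstance(y, Fun):
        if len(x.args) != len(y.args):
            raise UnificationError("argument count mismatch")
        for x_arg, y_arg in zip(x.args, y.args):
            unify(x_arg, y_arg)
        unify(x.result, y.result)
    elif x != y:
        raise UnificationError(f"cannot unify {x} with {y}")


_ids = itertools.count()


def fresh():
    """Create a new, unbound type variable."""
    return TypeVar(f"t{next(_ids)}")


def infer(expr, env):
    """Statically 'evaluate' an expression to its type."""
    kind = expr[0]
    if kind == "int":                 # ("int", 10)
        return "int"
    if kind == "var":                 # ("var", "x")
        return env[expr[1]]
    if kind == "apply":               # ("apply", "+", [args...])
        _, name, args = expr
        result = fresh()
        unify(Fun([infer(arg, env) for arg in args], result), env[name])
        return result
    raise ValueError(f"unknown expression kind: {kind}")


def infer_fn(name, param, body, env):
    """Infer a one-argument function, binding its name first in case it recurses."""
    arg, result = fresh(), fresh()
    env[name] = Fun([arg], result)
    local = {**env, param: arg}       # extended environment for the body
    unify(infer(body, local), result)


def show(t):
    t = resolve(t)
    if isinstance(t, TypeVar):
        return f"<{t.name}>"
    if isinstance(t, Fun):
        args = ", ".join(show(arg) for arg in t.args)
        simple = len(t.args) == 1 and not isinstance(resolve(t.args[0]), Fun)
        return f"{args if simple else '(' + args + ')'} → {show(t.result)}"
    return t


env = {"+": Fun(["int", "int"], "int")}
infer_fn("double", "x", ("apply", "+", [("var", "x"), ("var", "x")]), env)
print(show(env["double"]))            # int → int
```

Note that `infer` never calls the function being applied; it only looks up the function’s type, which is the “static evaluation” described earlier.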

Proceeding, we next encounter def y = 10;. That rather uninterestingly extends our global environment to:

    {
        '+' => (int, int) → int
        'double' => int → int
        'y' => int
    }

Lastly we see the line def z = double(y);. Because of the def we immediately extend our environment with a binding of z to a new placeholder <d>:

    {
        '+' => (int, int) → int
        'double' => int → int
        'y' => int
        'z' => <d>
    }

We see the form of a function application, so we look up the types of the argument and result and create the structure:

    int → <d>

Next we look up the type of double and unify the two:

    int → <d>
    int → int

<d> gets the value int and our job is done, the code type checks successfully.

What if the types were wrong? Suppose the code had said def y = "hello"? That would have resulted in the attempted unification:

    string → <d>
    int → int

That unification would fail and we would report a type error, without having even run the program!
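Both outcomes, the successful check and the failure, can be sketched in a few lines of Python. As before this is only an illustration under my own assumptions: simple types are strings, and `check_call`, `unify`, `TypeVar`, `Fun` and `UnificationError` are hypothetical names rather than anything from F♮.

```python
class UnificationError(Exception):
    """Raised when two types cannot be made equal."""


class TypeVar:
    def __init__(self, name):
        self.name = name
        self.ref = None


class Fun:
    def __init__(self, args, result):
        self.args = list(args)
        self.result = result


def resolve(t):
    while isinstance(t, TypeVar) and t.ref is not None:
        t = t.ref
    return t


def unify(x, y):
    x, y = resolve(x), resolve(y)
    if x is y:
        return
    if isinstance(x, TypeVar):
        x.ref = y
    elif isinstance(y, TypeVar):
        y.ref = x
    elif isinstance(x, Fun) and isinstance(y, Fun):
        if len(x.args) != len(y.args):
            raise UnificationError("argument count mismatch")
        for x_arg, y_arg in zip(x.args, y.args):
            unify(x_arg, y_arg)
        unify(x.result, y.result)
    elif x != y:
        raise UnificationError(f"cannot unify {x} with {y}")


def check_call(fn_type, arg_type):
    """Return the result type of a one-argument call, or raise UnificationError."""
    d = TypeVar("d")                      # placeholder for the result, <d>
    unify(Fun([arg_type], d), fn_type)    # arg_type → <d> against fn_type
    return resolve(d)


double_type = Fun(["int"], "int")         # int → int, as inferred earlier

z_type = check_call(double_type, "int")   # def y = 10
print(z_type)                             # int

try:
    check_call(double_type, "string")     # def y = "hello"
    error = None
except UnificationError as exc:
    error = str(exc)
print(error)                              # cannot unify string with int
```

Nothing in `check_call` runs double; the error is found purely by comparing type structures.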